Scaling compute and data reliably drives down next-token prediction loss. This relationship is well-established and almost physics-like in its predictability.

However, mapping that mathematically predictable loss to real-world capabilities is far noisier. Most scaling laws tell us how well a model will predict the next word — not whether it can write exploit code, synthesize a novel protein, or pass a bar exam. The relationship between loss and these real-world capabilities is poorly understood, highly task-dependent, and subjective in its modeling.

Because we can’t cleanly predict capabilities from loss, we need an empirical science of AI evaluations. In this post, I’ll walk through some naive attempts at chaining scaling laws to predict real-world performance, and show why these predictions are far less reliable than many claim.

Scaling laws aren’t as predictive as you think

Many claim that if you look at these scaling laws and extrapolate, it’s clear that we’ll see massive gains by simply scaling up existing models. Scaling laws are quantitative relationships that relate model inputs (data and compute) to model outputs (how well the model predicts the next word). They’re created by plotting model inputs and outputs at various levels on a graph.

But using scaling laws to make predictions isn’t as easy as people claim. To begin with, most scaling laws (Kaplan et al, Chinchilla, and Llama) output how well models predict the next word in a dataset, not how well models perform at real-world tasks. This 2023 blog post by a popular OpenAI researcher describes how “it’s currently an open question if surrogate metrics [like loss] could predict emergence … but this relationship has not been well-studied enough …”

Chaining two approximations together to make predictions

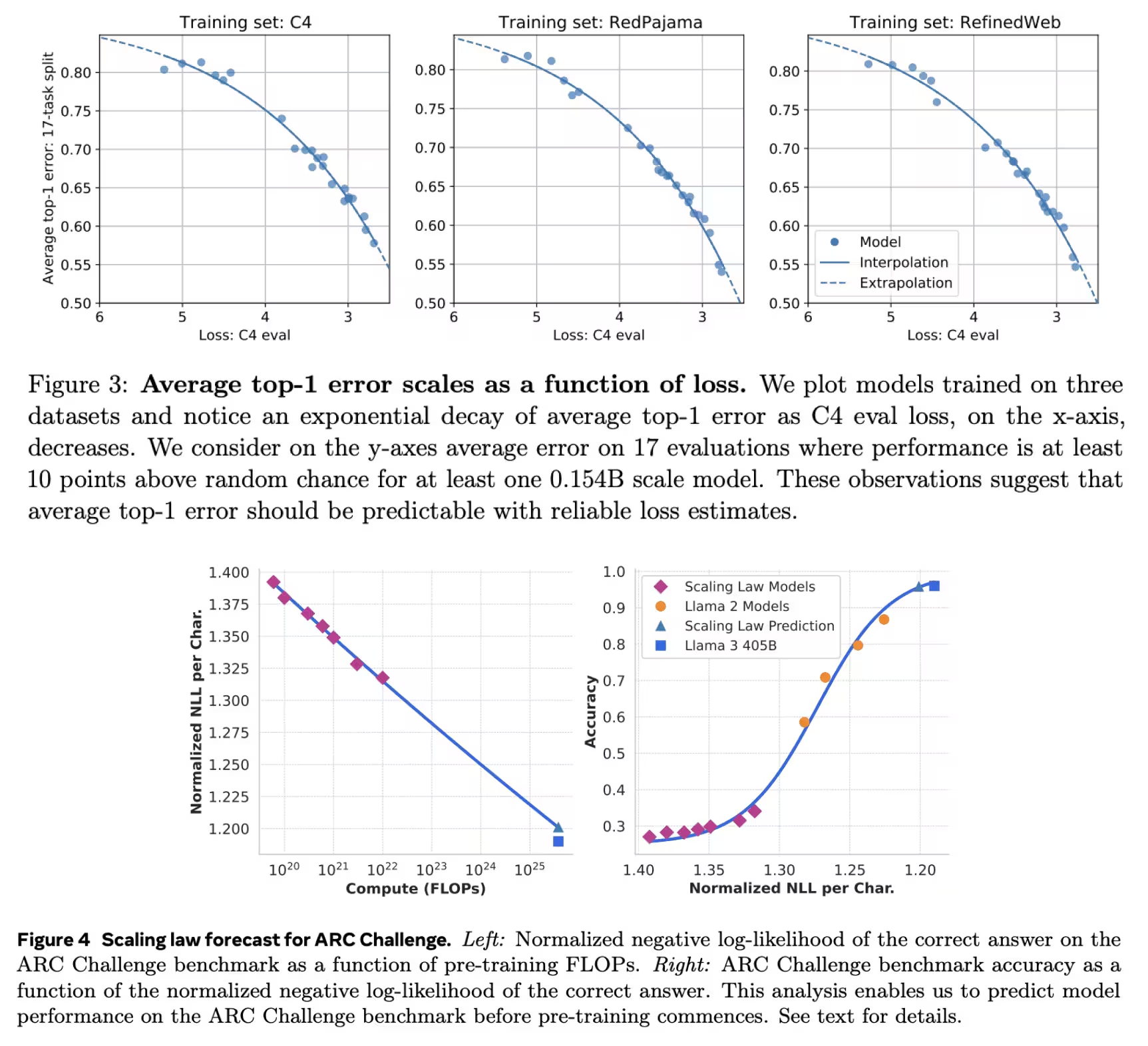

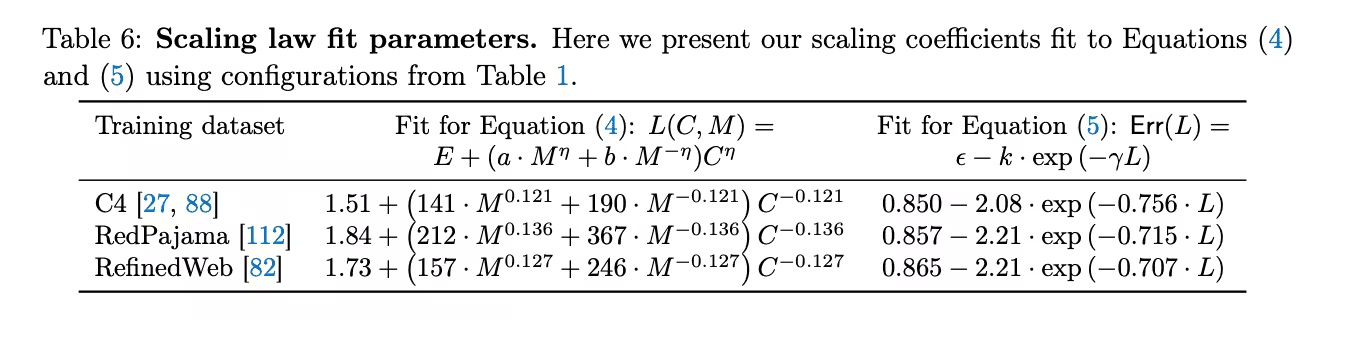

To fix the above issue, you can fit a second scaling law that quantitatively relates upstream loss to real-world task performance, and then chain the two scaling laws together to make predictions of real-world tasks.

Loss = f(data, compute)

Real world task performance = g(loss)

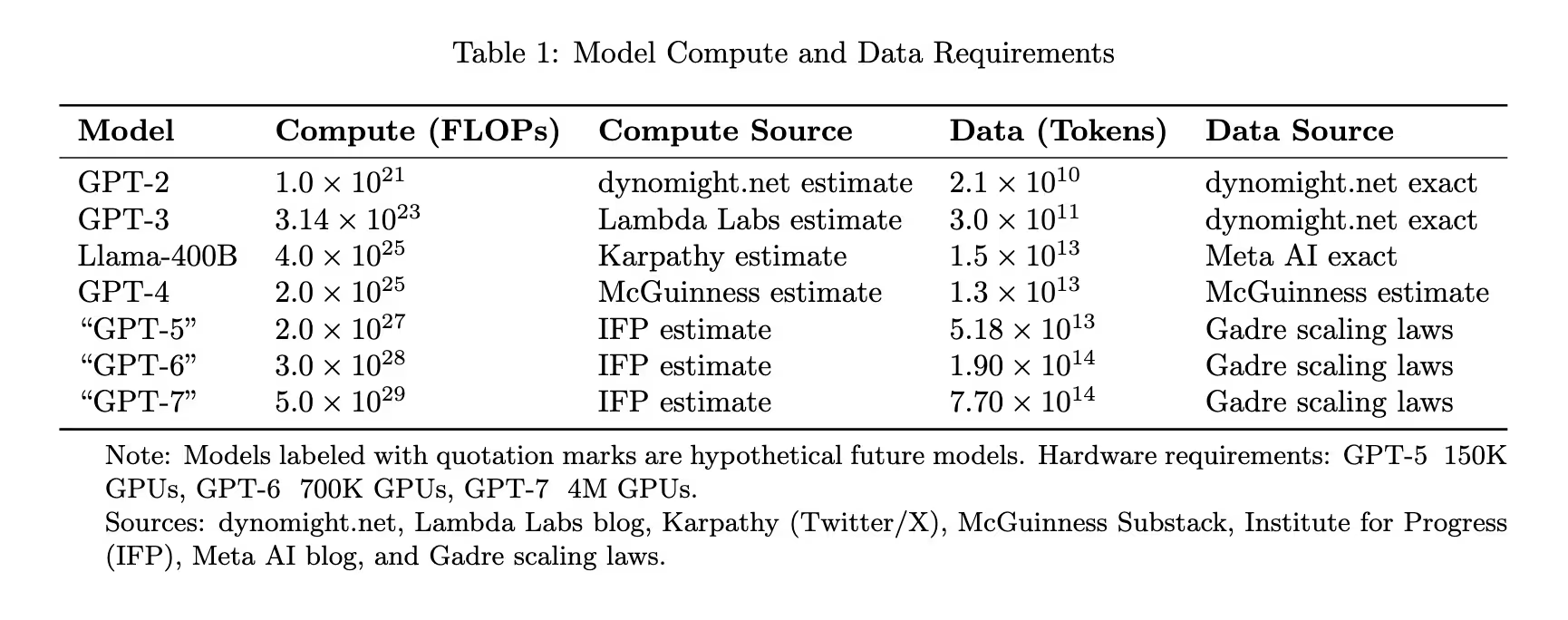

Real world task performance = g(f(data, compute))In 2024, Gadre et al and Dubet et al introduced scaling laws of that sort. Dubet uses this method of chained laws for prediction and claims that its predictive ability “extrapolates [well] over four orders of magnitude” for the Llama 3 models.

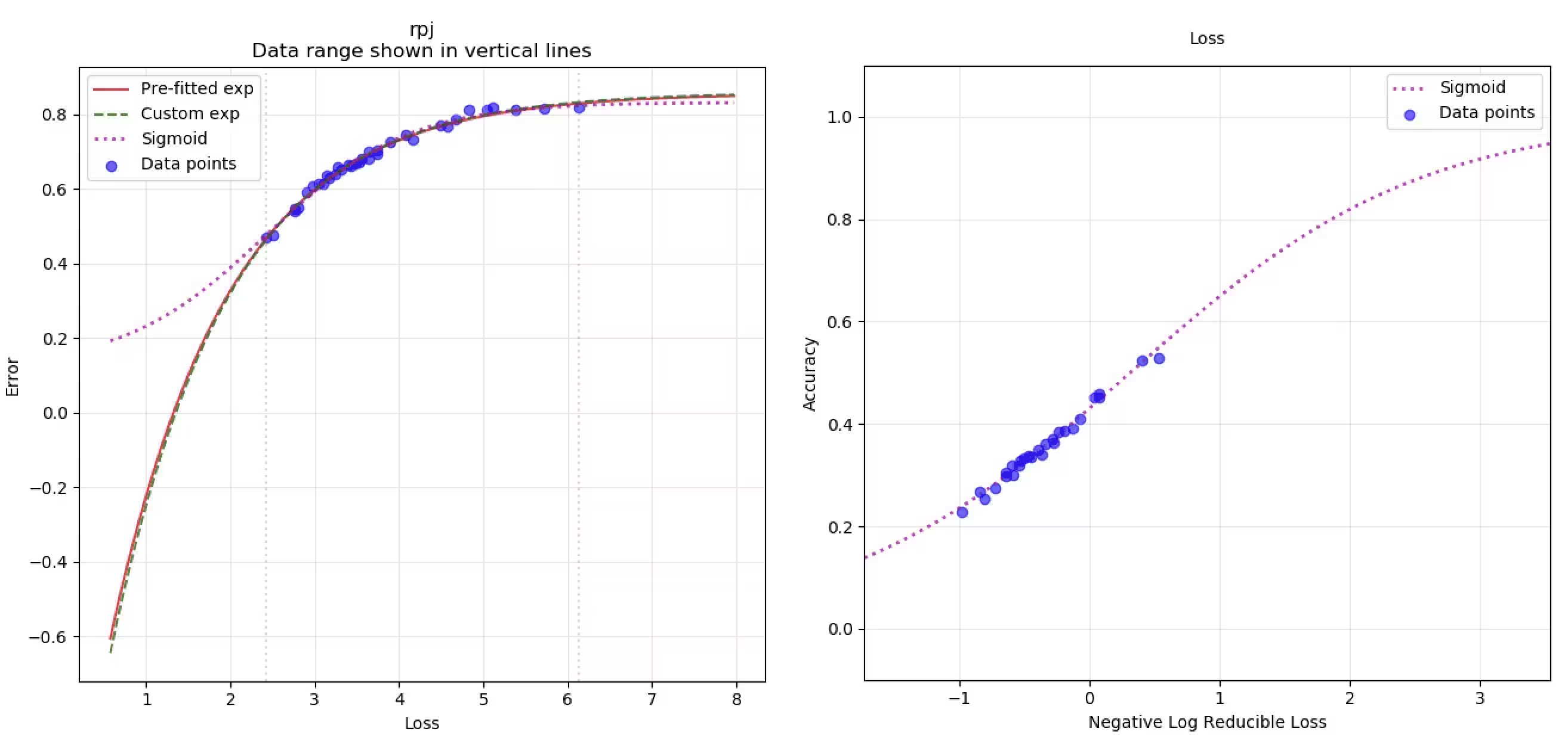

However, work on these second scaling laws is nascent, and with so few data points, choosing a fitting function becomes a highly subjective judgment call. For example, in the figures below, Gadre assumes an exponential relationship across an average of many tasks (top) across many tasks, and Dubet assumes a sigmoidal relationship for a single task (bottom) for the ARC-AGI task. These scaling laws are also highly task-dependent (see Mosaic).

Without a strong hypothesis for the relationship between loss and real-world task accuracy, we don’t have a strong hypothesis for the capabilities of future models.

A shoddy attempt at using chained scaling laws for predictions

What would happen if we were to use some of these naively chained scaling laws to perform predictions anyways? Note the goal here is to show how one can use some set of scaling laws (Gadre) to obtain a prediction, rather than obtain a detailed prediction itself.1

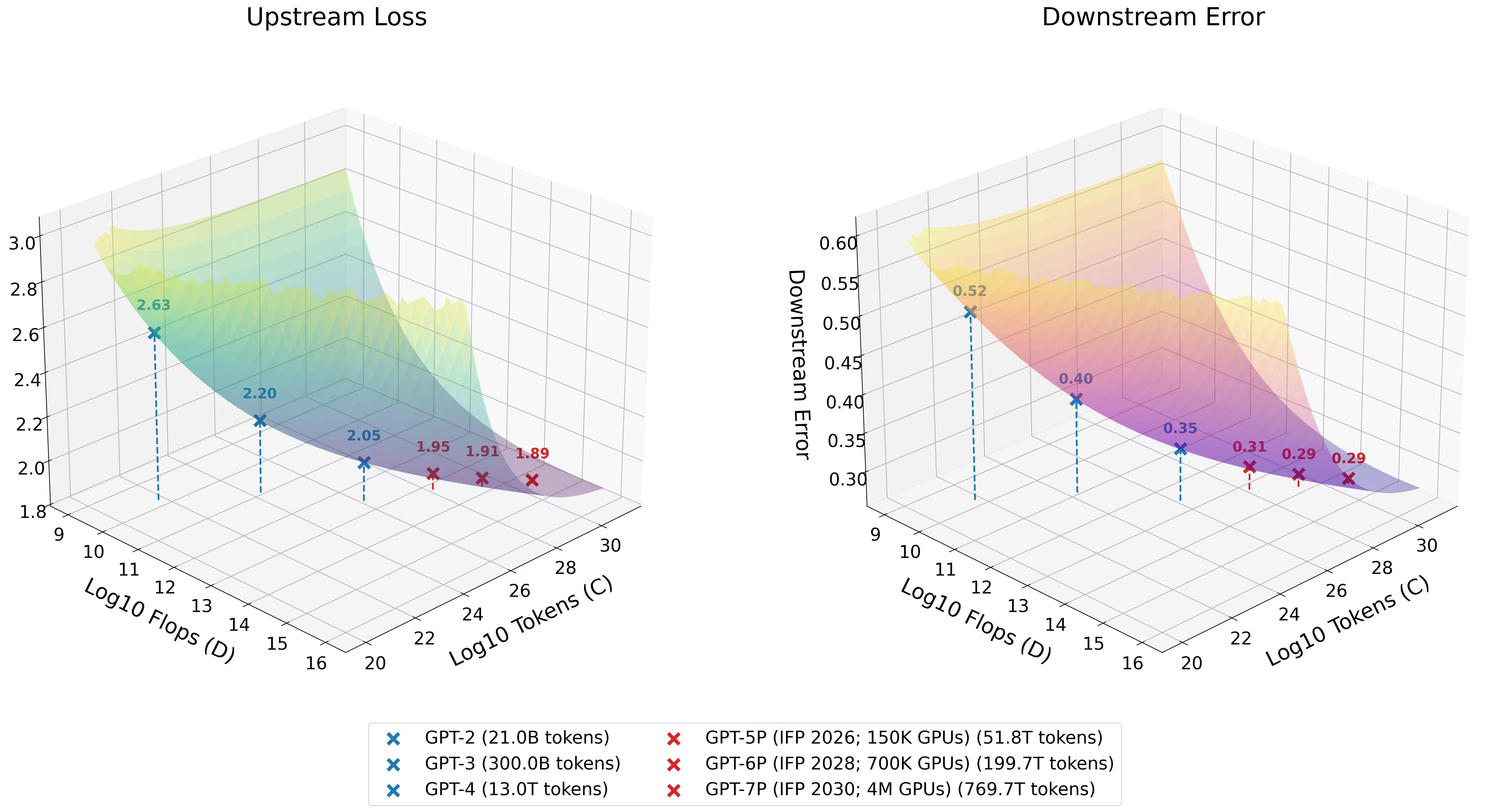

To start, we can use publicly available information to estimate the data and compute inputs2 for the next few model releases. We can take announcements of the largest data center build-outs, estimate the expected resulting compute based on their GPU capacity, and map them onto each successive model generation.3

Then, we can use scaling laws to estimate the amount of data these clusters would need. The largest cluster estimate from the Institute for Progress would ideally train on 770T tokens to minimize loss according to the scaling laws we’re using—a few times larger than the size of the indexed web.4 That seems challenging but feasible, so let’s just use that for now.

See link to Google Sheet of data and compute estimates5

See link to Google Sheet of data and compute estimates5

Finally, we can plug these inputs into chained scaling laws and extrapolate. The right plot is what we’re interested in. It shows real-world task performance on the vertical axis, plotted against the inputs of data and compute on the horizontal axes. Blue points represent performance on existing models (GPT-2, GPT-3, etc), while red points are projections for the next scale-ups (GPT-5, GPT-6, GPT-7, etc):

See link to Github repository for chained Gadre scaling laws and resulting plots

See link to Github repository for chained Gadre scaling laws and resulting plots

Using these scaling laws, the predicted improvement in real-world tasks from GPT-4 to a GPT-7 style model (~25000X more compute) would be around the same magnitude as the improvement from GPT-3 to GPT-4 (~100X more compute). But the takeaway isn’t that scaling has hit a wall — it’s that these public, naive scaling laws fail to capture reality. They’re fit on open datasets and small models, and don’t account for frontier lab advantages: algorithmic efficiency gains, synthetic data, RLHF, and other post-training techniques. The predicted stagnation likely reflects the limits of public visibility into frontier scaling, not the limits of scaling itself.

Are we getting close to the irreducible loss?

If you look at the left plot, these scaling laws suggest we’re approaching the irreducible loss on this particular dataset.6 The irreducible loss is closely related to the entropy of the dataset, and represents the theoretical best performance a model can reach on that dataset. With Gadre scaling laws on RedPajama, the best possible model can only reach an irreducible loss of ~1.84, and GPT-4 is already estimated at ~2.05.7

But this is the irreducible loss for one dataset under one set of scaling laws. Frontier labs train on different data mixtures, use synthetic data, and apply post-training techniques that these public scaling laws don’t capture. Most labs have also stopped releasing loss values for their frontier training runs, so we don’t actually know how close they are to any irreducible loss — or whether the relevant loss landscape looks anything like this one.

Subjectivity in fitting functions and the limits of our data

As mentioned previously, the choice of fitting function on the second scaling law is highly subjective. For example, we could refit the loss and performance points from the Gadre paper using a sigmoidal function instead of an exponential one:

See link to Github repository for sigmoidal fit on Gadre data and resulting plots

See link to Github repository for sigmoidal fit on Gadre data and resulting plots

Yet, the conclusion remains largely unchanged. Comparing the exponential fit (red line) with our custom sigmoidal fit (dotted purple line) in the left graph, the limitation is clear: we simply don’t have enough data points to confidently determine the best fitting function for relating loss to real-world performance.

No one actually knows how strong the next models will be

There are obviously many ways to improve the above “prediction”: using better scaling laws, using better estimates of data and compute, and so on.8 The point of the above exercise is more to show the amount of uncertainty baked into these predictions than to perform an accurate prediction.

Ultimately, scaling laws are noisy approximations, and with this chained method of prediction, we’re coupling two noisy approximations together. When you consider that the next models may have entirely different scaling laws, as a result of different architectures or data mixes, no one really knows the capabilities of the next few model scale-ups.

We need empirical evaluations, not extrapolations

Extrapolating scaling laws is far less straightforward than many claim. The first scaling law — loss as a function of data and compute — is relatively predictable. But the second — real-world capabilities as a function of loss — is subjective, noisy, and poorly constrained by data.

This means we cannot predict exactly when specific capabilities will emerge just by looking at a loss curve. We can’t look at a scaling law and say “at this many FLOPs, the model will be able to conduct autonomous cyber-offense” or “at this loss level, it will be able to synthesize novel pathogens.” The mapping from loss to dangerous capabilities is too noisy for that kind of precision.

What we need instead is an empirical science of AI evaluations — rigorous, task-specific benchmarks that directly measure the capabilities we care about, rather than relying on extrapolations from surrogate metrics.

Thank you to Celine, Matthew, Jasmine, Coen, Shreyan, Nikhil, Trevor, Namanh, and Gabe for their invaluable feedback; Justin for his help with scaling laws; and Bela, Susan, and Maxwell for ‘encouragement.’

November 30, 2024 update: I changed the data and compute estimates to IFP estimates after Miles’ note instead of using my own compute estimates.

Title update: this post was originally titled “Will We Have AGI?” The framing felt outdated, so I renamed it to “Scaling Laws Aren’t as Predictive as You Think,” which better reflects the actual content.

Notes

-

There are many potential issues with this approach, including the choice of tasks, the assumed function for modeling loss to error, the number of data points used for the fit, not considering the different quality of training tokens etc. ↩

-

“I disagree with your methods on predicting the inputs (data and compute) for the next few model releases. You’re massively underestimating or overestimating the compute we’ll get from data center build-outs, distributed training, and GPU improvements, as well as the data we’ll get from leveraging multi-modal data and synthetic data. Why don’t you replot with those considerations?” With Gadre’s scaling laws, the predicted performance stagnates regardless — but this likely reflects limitations of these particular public scaling laws rather than a fundamental ceiling. ↩

-

Notably, we’re not taking into account potential advances such as cross-data center training ↩

-

I’m using the simplifying assumption that total GPU flops = effective FLOPs (which is an overestimate because clusters will incur communication overhead) ↩

-

I don’t include Llama-400B model in later graphs but include it here for the sake of comparison to GPT-4 input estimates ↩

-

“I don’t understand the irreducible error in Gadre’s scaling laws. Why is the irreducible loss for the C4 dataset lower than the irreducible loss for the RedPajama dataset, when the C4 dataset is a subset of the RedPajama dataset?” Scaling laws fit on a small number of data points are rough approximations. As mentioned above, I think the method we have has several issues, and my larger point is that publicly available scaling laws aren’t trustworthy for predictions multiple orders of magnitude away from their fitted points. ↩

-

It’s possible that the last few gains in loss will lead to extraordinary outcomes (e.g being able to predict every single word is very different than being able to predict 99% of words) but this relates to the point of us not really knowing how to model the relationship between loss and capabilities in a generic way ↩

-

Potential blog: What should you do if you believe in AGI? ↩

Subscribe to Kevin Niechen's blog

Notes and essays on applied AI, venture investing, and building companies. Get new posts in your inbox via Substack, or use RSS.